
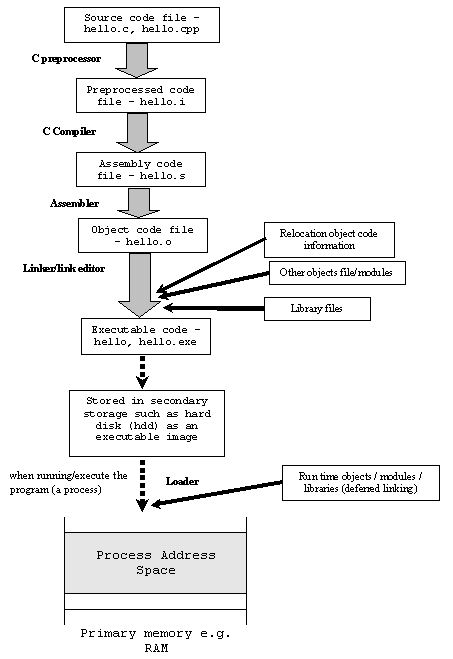

Compiler - Wikipedia, the free encyclopedia.

A compiler is a computer program (or a set of programs) that transforms source code written in a programming language (the source language) into another computer language (the target language), with the latter often having a binary form known as object code. If the compiled program can run on a computer whose CPU or operating system is different from the one on which the compiler runs, the compiler is known as a cross-compiler. More generally, compilers are a specific type of translator. While all programs that take a set of programming specifications and translate them, i. ...

A program that translates between high-level languages is usually called a source-to-source compiler or transpiler. A language rewriter is usually a program that translates the form of expressions without a change of language. The term compiler-compiler is sometimes used to refer to a parser generator, a tool often used to help create the lexer and parser.
A compiler is likely to perform many or all of the following operations: lexical analysis, preprocessing, parsing, semantic analysis (syntax-directed translation), code generation, and code optimization. Program faults caused by incorrect compiler behavior can be very difficult to track down and work around; therefore, compiler implementors invest significant effort to ensure compiler correctness.

History. Although the first high-level language is nearly as old as the first computer, the limited memory capacity of early computers led to substantial technical challenges when the first compilers were designed. The first compiler was written by Grace Hopper, in 1952, for the A-0 programming language; the A-0 functioned more as a loader or linker than the modern notion of a compiler. The first autocode and its compiler were developed by Alick Glennie in 1952 for the Mark 1 computer at the University of Manchester, and the autocode is considered by some to be the first compiled programming language. COBOL was an early language to be compiled on multiple architectures, in 1960. Because of the expanding functionality supported by newer programming languages and the increasing complexity of computer architectures, compilers have become more complex. Early compilers were written in assembly language. The first self-hosting compiler, capable of compiling its own source code in a high-level language, was created for Lisp by Tim Hart and Mike Levin at MIT in 1962.

Building a self-hosting compiler is a bootstrapping problem. Before the development of FORTRAN, the first high-level language, in the 1950s, machine-dependent assembly language was widely used. While assembly language provides more abstraction than machine code on the same architecture, just as with machine code, it has to be modified or rewritten if the program is to be executed on a different computer hardware architecture. With the advent of high-level programming languages that followed FORTRAN, such as COBOL, C, and BASIC, programmers could write machine-independent source programs. A compiler translates the high-level source programs into target programs in machine languages for the specific hardware. Once the target program is generated, the user can execute the program.

Compilers in education. A well-documented example is Niklaus Wirth's PL/0 compiler, which Wirth used to teach compiler construction in the 1970s.

One classification of compilers is by the platform on which their generated code executes. This is known as the target platform. A native or hosted compiler is one whose output is intended to run directly on the same type of computer and operating system that the compiler itself runs on. The output of a cross compiler is designed to run on a different platform.

Cross compilers are often used when developing software for embedded systems that are not intended to support a software development environment. The output of a compiler that produces code for a virtual machine (VM) may or may not be executed on the same platform as the compiler that produced it. For this reason such compilers are not usually classified as native or cross compilers.

The lower-level language that is the target of a compiler may itself be a high-level programming language. C, often viewed as some sort of portable assembler, can also be the target language of a compiler. For example, Cfront, the original compiler for C++, used C as its target language. The C created by such a compiler is usually not intended to be read and maintained by humans, so indent style and pretty C intermediate code are irrelevant. Some features of C make it a good target language. For example, C code with #line directives can be generated to support debugging of the original source.

Compiled versus interpreted languages. High-level programming languages are usually designed with one kind of translation in mind, as either compiled or interpreted languages. However, in practice there is rarely anything about a language that requires it to be exclusively compiled or exclusively interpreted, although it is possible to design languages that rely on re-interpretation at run time. The categorization usually reflects the most popular or widespread implementations of a language. Interpretation does not replace compilation completely. It only hides it from the user and makes it gradual. Even though an interpreter can itself be interpreted, a directly executed program is needed somewhere at the bottom of the stack (see machine language). Modern trends toward just-in-time compilation and bytecode interpretation at times blur the traditional categorizations of compilers and interpreters.

Some language specifications spell out that implementations must include a compilation facility; for example, Common Lisp. However, there is nothing inherent in the definition of Common Lisp that stops it from being interpreted.
Other languages have features that are very easy to implement in an interpreter, but make writing a compiler much harder; for example, APL, SNOBOL4, and many scripting languages allow programs to construct arbitrary source code at runtime with regular string operations, and then execute that code by passing it to a special evaluation function.
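
The run-time code construction described above can be sketched in Python, used here only as a convenient scripting language (the text itself names APL and SNOBOL4). The helper `build_adder_source` and the variable names are hypothetical; `exec` and `eval` are Python's built-in evaluation functions.

```python
def build_adder_source(n: int) -> str:
    """Build the source text of a function using ordinary string operations."""
    return f"def add_{n}(x):\n    return x + {n}\n"


# Source code that did not exist until the program ran.
source = build_adder_source(5)

# exec() executes statements; the definitions land in the given namespace.
namespace = {}
exec(source, namespace)
add_5 = namespace["add_5"]
result = add_5(10)

# eval() evaluates a single expression that was also assembled as a string.
expression = " + ".join(str(i) for i in range(1, 5))
total = eval(expression)

print(source)
print(result, total)
```

Because the program text is only known at run time, an ahead-of-time compiler cannot translate it in advance, which is why a compiled implementation has to carry a translator along with the program.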

To implement these features in a compiled language, programs must usually be shipped with a runtime library that includes a version of the compiler itself.

Special types of compilers. Source-to-source compilers take a high-level language as input and produce a high-level language as output. For example, an automatic parallelizing compiler will frequently take in a high-level language program as input and then transform the code and annotate it with parallel code annotations (e.g. OpenMP) or language constructs (e.g. Fortran's DOALL statements). Bytecode compilers compile to the assembly language of a theoretical machine, like some Prolog implementations. A just-in-time compiler (JIT compiler) is the last part of a multi-pass compiler chain in which some compilation stages are deferred to run-time. Examples are implemented in Smalltalk, Java and Microsoft .NET's Common Intermediate Language (CIL) systems. In these systems, applications are first compiled to bytecode. This bytecode is then compiled using a JIT compiler to native machine code just when the execution of the program is required. For a hardware compiler (synthesis tool), the output of the compilation is only an interconnection of transistors or lookup tables. An example of a hardware compiler is XST. Similar tools are available from Altera.

A compiler verifies code syntax, generates efficient object code, performs run-time organization, and formats the output according to assembler and linker conventions. In the early days, the approach taken to compiler design was directly affected by the complexity of the processing, the experience of the person(s) designing it, and the resources available. A compiler for a relatively simple language written by one person might be a single, monolithic piece of software. When the source language is large and complex, and high-quality output is required, the design may be split into a number of relatively independent phases. Having separate phases means development can be parceled up into small parts and given to different people. It also becomes much easier to replace a single phase with an improved one, or to insert new phases later.
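
As a rough illustration of why independent phases are easy to replace or add, the sketch below models each phase as a function over a toy intermediate representation. Everything here is an assumption made for the example: the `(op, arg)` instruction format, the phase names `drop_nops` and `fold_constants`, and the driver `run_phases` do not come from any real compiler.

```python
from typing import Callable, List, Tuple

# Toy intermediate representation: a list of (operation, argument) pairs
# for an imaginary stack machine.
Instr = Tuple[str, object]
Phase = Callable[[List[Instr]], List[Instr]]


def drop_nops(code: List[Instr]) -> List[Instr]:
    """Phase that removes instructions with no effect."""
    return [instr for instr in code if instr[0] != "NOP"]


def fold_constants(code: List[Instr]) -> List[Instr]:
    """Phase that rewrites PUSH a, PUSH b, ADD into PUSH (a + b)."""
    out: List[Instr] = []
    for instr in code:
        if (instr == ("ADD", None) and len(out) >= 2
                and out[-1][0] == "PUSH" and out[-2][0] == "PUSH"):
            b = out.pop()[1]
            a = out.pop()[1]
            out.append(("PUSH", a + b))
        else:
            out.append(instr)
    return out


def run_phases(code: List[Instr], phases: List[Phase]) -> List[Instr]:
    """Apply each phase in order; every phase sees the previous one's output."""
    for phase in phases:
        code = phase(code)
    return code


program = [("PUSH", 2), ("NOP", None), ("PUSH", 3), ("ADD", None),
           ("LOAD", "x"), ("ADD", None)]

basic = run_phases(program, [drop_nops])
# Inserting a new phase later only changes the list of phases.
optimized = run_phases(program, [drop_nops, fold_constants])
print(basic)
print(optimized)
```

Each phase only depends on the shared instruction format, so a phase can be developed, swapped, or inserted without touching the others. The order still matters: here the folding phase only finds the two adjacent PUSH instructions because the NOP between them was removed first.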
The division of the compilation processes into phases was championed by the Production Quality Compiler-Compiler Project (PQCC) at Carnegie Mellon University. This project introduced the terms front end, middle end, and back end. All but the smallest of compilers have more than two phases. The point at which these ends meet is not always clearly defined.

One-pass versus multi-pass compilers. Compiling involves performing lots of work, and early computers did not have enough memory to contain one program that did all of this work. So compilers were split up into smaller programs which each made a pass over the source (or some representation of it), performing some of the required analysis and translations. The ability to compile in a single pass has classically been seen as a benefit because it simplifies the job of writing a compiler, and one-pass compilers generally perform compilations faster than multi-pass compilers. Thus, partly driven by the resource limitations of early systems, many early languages were specifically designed so that they could be compiled in a single pass (e.g. Pascal).

In some cases the design of a language feature may require a compiler to perform more than one pass over the source. For instance, consider a declaration that appears later in the source than a statement whose translation it affects. In this case, the first pass needs to gather information about declarations appearing after statements that they affect, with the actual translation happening during a subsequent pass. The disadvantage of compiling in a single pass is that it is not possible to perform many of the sophisticated optimizations needed to generate high-quality code. It can be difficult to count exactly how many passes an optimizing compiler makes. For instance, different phases of optimization may analyse one expression many times but only analyse another expression once.

Splitting a compiler up into small programs is a technique used by researchers interested in producing provably correct compilers.
Proving the correctness of a set of small programs often requires less effort than proving the correctness of a larger, single, equivalent program.

Three-phase compiler structure. The front end, middle end, and back end are named after the Production Quality Compiler-Compiler Project phases mentioned before. The front end verifies syntax and semantics according to a specific source language.
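
A minimal sketch of a front end that needs two passes, assuming a made-up two-statement language (`let NAME = INT` declares a name, `print NAME` uses it) in which a declaration may appear after the statement it affects. The grammar, the function names, and the error format are all hypothetical and chosen only for illustration.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical toy grammar: one statement per line.
DECL = re.compile(r"^let ([a-z_]\w*) = (\d+)$")
USE = re.compile(r"^print ([a-z_]\w*)$")


def collect_declarations(lines: List[str]) -> Dict[str, int]:
    """Pass 1: verify syntax and record every declaration, wherever it appears."""
    symbols: Dict[str, int] = {}
    for number, line in enumerate(lines, start=1):
        decl = DECL.match(line)
        if decl:
            symbols[decl.group(1)] = int(decl.group(2))
        elif not USE.match(line):
            raise SyntaxError(f"line {number}: cannot parse {line!r}")
    return symbols


def check_uses(lines: List[str], symbols: Dict[str, int]) -> List[Tuple[int, str]]:
    """Pass 2: semantic check; return (line, name) for each undeclared use."""
    errors: List[Tuple[int, str]] = []
    for number, line in enumerate(lines, start=1):
        use = USE.match(line)
        if use and use.group(1) not in symbols:
            errors.append((number, use.group(1)))
    return errors


def front_end(source: str) -> Tuple[Dict[str, int], List[Tuple[int, str]]]:
    """Run both passes over the non-blank lines of the source text."""
    lines = [line.strip() for line in source.splitlines() if line.strip()]
    symbols = collect_declarations(lines)
    return symbols, check_uses(lines, symbols)


source = """
print total
let total = 42
print missing
"""
symbols, errors = front_end(source)
print(symbols)
print(errors)
```

A single pass would reject `print total` on the first line because `total` has not been seen yet; collecting declarations first lets the second pass accept it and report only the genuinely undeclared name.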


October 2017
